Responding to Deepfakes in Social Engineering Attacks

Deepfake videos, audio, and images are proliferating, and they keep getting better. People are using them in all sorts of ways, including creating parodies, reviving long-deceased historical figures, delivering lectures, and de-aging actors in motion pictures.

Unfortunately, deepfake technology has also paved the way for malicious activities like deceptive marketing campaigns, identity fraud, and political misinformation.

According to Datambit, there has been an 82% increase in deepfake incidents.

One of the most concerning deepfake threats is social engineering.

Traditional social engineering attacks alone are already worrisome. But once you add deepfakes to the mix, they become even more deceptive, potent, and destructive.

In this article, we’ll share key information that can help you defend against deepfake threats and the consequences that accompany them. First, let’s clarify what we mean by a “deepfake.”

What are Deepfakes?

Deepfakes are AI-generated synthetic media that replicate a person’s likeness in videos, audio, or images.

For example, a deepfake might be a video that appears to feature Tom Cruise even though the person on screen isn’t actually him, or an audio clip of someone who sounds like President Donald Trump saying something that the real Donald Trump never said.

The most notable deepfake generation technology is the Generative Adversarial Network (GAN). A GAN consists of two neural networks (machine learning models loosely inspired by the way neurons in the brain are connected) that are trained in competition with one another.

One network, the generator, produces fake content, such as images and videos. The other network, the discriminator, is shown both real and generated content and tries to tell them apart. Each round of this feedback loop teaches the discriminator to spot fakes more reliably and teaches the generator to produce fakes that are harder to spot.

With enough training, the generator’s output can become very difficult to distinguish from the real thing.
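For readers who want the formal version, the competition between the two networks is commonly written as a minimax objective. This is the standard formulation from the original GAN research, not something specific to any tool mentioned in this article. The symbols are notation introduced here for illustration: $G$ is the generator, $D$ is the discriminator, $x$ is a real sample, and $z$ is the random noise the generator turns into a fake.

$$
\min_{G} \max_{D} \; V(D, G) =
\mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
+ \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to make $V$ large by scoring real samples close to 1 and generated samples close to 0. The generator tries to make $V$ small by producing samples that the discriminator scores close to 1.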

How Deepfakes are Used in Social Engineering

Because deepfakes are so believable, cybercriminals now use them in social engineering campaigns. Here are two examples.

Enhanced phishing attacks

Some people prefer to send voice or video notes instead of text. While these methods of communication used to be challenging to fabricate, they can now be artificially reconstructed and modified through deepfake technology.

Assuming they are able to hijack a victim’s communications account (e.g., the victim’s email or instant messaging account), attackers can then attach a deepfake video or audio recording impersonating the victim.

Suppose the victim is a CEO. In that case, the attackers can send a fake recording to the finance or accounting department, declaring a fabricated emergency and asking that funds be wired to an account the attackers control.

Celebrity impersonation in advertising scams

Scammers now use deepfakes of celebrities and other popular figures in ads to dupe fans and the general public into purchasing a product or service. Some of those ads may even be harmful. If you click on them, you could end up downloading malware.

Notable Real-World Examples

Here are some real-world examples of deepfakes in action.

Fake Taylor Swift endorsement

In 2024, McAfee issued a warning about a deepfake ad that showed Taylor Swift promoting Le Creuset cookware. In reality, the person in the video ad wasn’t Taylor Swift, nor was it even a real person.

$25 million transfer after a multi-person video conference

Also in 2024, a finance worker was tricked into transmitting $25 million to fraudsters after a seemingly real multi-person video conference. The others who participated in the conference looked and sounded like the victim’s colleagues.

As it turned out, they were all deepfake clones.

The finance worker was initially suspicious after receiving what was purportedly a message from the company’s UK-based CFO requesting that the fund transfer be done in secret. However, after unknowingly meeting with the deepfake clones, the finance worker was convinced the request was authentic.

Deepfake Detection: How to Identify a Deepfake Social Engineering Attack

Considering the rise of elaborate AI social engineering attacks like the ones above, it’s critical to distinguish deepfakes from the real deal. But how? You can find some telltale signs—at least for now.

- Audio/video synchronization issues – Many deepfakes still struggle to keep speech and lip movement in sync, especially in real-time video calls. If the lips and voice of the person you’re talking to don’t seem to be in sync, consider that a red flag.

- Unusual vocal elements – If the person speaking sounds robotic, makes unnatural pauses, or speaks in mismatched tones, that can be a sign you’re speaking to a deepfake.

- Avoidance of real-time interaction – If something feels off to you, ask the person improvised questions or ask them to change their camera angle. Many deepfakes rely on pre-generated content and have trouble improvising. So, if you make those requests, an impersonator will likely come up with various reasons to avoid fulfilling them.

More importantly, look for the hallmark of traditional social engineering attacks: a sense of urgency coupled with an unusual request. This combination alone should set off alarm bells.
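The urgency-plus-unusual-request hallmark can be sketched as a simple check. This is a minimal illustration, not a real detector: the cue lists and the names `assess_request` and `Assessment` are hypothetical, and plain keyword matching will miss many real attacks and flag some legitimate messages.

```python
from dataclasses import dataclass

# Hypothetical cue lists for illustration only; a real deployment would tune
# these to its own language, business processes, and threat model.
URGENCY_CUES = ("urgent", "immediately", "right away", "asap", "time-sensitive")
UNUSUAL_CUES = ("wire transfer", "gift card", "keep this secret",
                "reset my password", "new bank account")


@dataclass(frozen=True)
class Assessment:
    urgent: bool   # the message pressures the recipient to act quickly
    unusual: bool  # the message asks for something outside normal process

    @property
    def red_flag(self) -> bool:
        # The hallmark is the combination, not either signal on its own.
        return self.urgent and self.unusual


def assess_request(text: str) -> Assessment:
    """Check a message or call transcript for both warning signs."""
    lowered = text.lower()
    return Assessment(
        urgent=any(cue in lowered for cue in URGENCY_CUES),
        unusual=any(cue in lowered for cue in UNUSUAL_CUES),
    )


if __name__ == "__main__":
    msg = "This is urgent. Send the wire transfer today and keep this secret."
    print(assess_request(msg).red_flag)  # True
```

A check like this is best treated as a prompt to slow down and verify, not as proof that a request is fake or genuine.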

How to Respond to a Deepfake Attack

So, let’s say you’ve identified a potential deepfake attack. What do you do?

- Calm down and resist urgency – Social engineering attacks thrive on urgency to bypass critical thinking. Thus, it's important to stay calm and assess the situation, especially if the request is framed as urgent or time-sensitive.

- Verify the other party's identity through alternative channels – Confirm the other party's identity using a different, trusted method, such as a phone call, email, or, better yet, in-person confirmation. Avoid relying solely on the video or audio you’re interacting with.

- Report the incident – If you’re working for an organization and deem it necessary, report the matter to your supervisor or IT department. They may be able to recommend a better course of action.
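The "alternative channels" step above can be expressed as a small rule: a request counts as verified only if the requester's identity was confirmed on a channel other than the one the request arrived on. The sketch below assumes a hypothetical `RequestRecord` type and made-up channel names; it illustrates the rule and is not a complete approval workflow.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class RequestRecord:
    description: str
    received_via: str  # channel the request arrived on, e.g. "video_call"
    # Channels on which the requester's identity was re-checked.
    confirmed_via: Set[str] = field(default_factory=set)


def independently_verified(record: RequestRecord) -> bool:
    """True only if identity was confirmed on a different channel
    from the one the request came in on."""
    return bool(record.confirmed_via - {record.received_via})


if __name__ == "__main__":
    record = RequestRecord("Transfer funds to a new account",
                           received_via="video_call")
    print(independently_verified(record))  # False: nothing confirmed yet

    record.confirmed_via.add("video_call")
    print(independently_verified(record))  # False: same channel as the request

    record.confirmed_via.add("phone_callback")
    print(independently_verified(record))  # True: confirmed out of band
```

The design choice here is that re-confirming on the original channel never counts, because that channel is the one the attacker may control.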

Preventive Measures Against Deepfake-based Social Engineering

AI is here to stay, and deepfake attacks will only become more realistic. In many cases, prevention is the most effective way to minimize the risk of a deepfake social engineering attack.

Here are some ways to prevent deepfake attacks.

- Use multi-factor authentication (MFA) – MFA won’t stop all deepfake attacks, but it can be effective against some. Let’s say you’re the company CFO, and a threat actor uses a deepfake replica of you to ask your IT admin to reset and share your email account password. Even with the new password, the attacker still needs a second factor of authentication, such as a time-based one-time password (TOTP), to get into the account.

- Adopt and promote security awareness – Cultivate a mindset that promotes vigilance, and encourage members of your organization to embrace it. If you’re in a leadership position, introduce security awareness training that covers deepfake threats.

- Use deepfake detection solutions – Fortunately, AI-powered cybersecurity can be used to counter deepfake threats. You can check out the following deepfake detection tools:

  - Datambit

  - DeepWare

  - Sensity AI

  - Microsoft Video Authenticator
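To make the MFA point above more concrete, here is a minimal sketch of how a time-based one-time password is computed, following the publicly documented TOTP algorithm (RFC 6238, built on HOTP from RFC 4226). It assumes common defaults of HMAC-SHA1, a 30-second time step, and 6 digits. The function names and the example secret are illustrative, and a production system should use a vetted library instead of hand-rolled code.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the time-step count."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify_totp(secret: bytes, submitted: str,
                at: Optional[float] = None) -> bool:
    """Compare a submitted code with the expected one in constant time."""
    return hmac.compare_digest(totp(secret, at), submitted)


if __name__ == "__main__":
    # Shared secret from the RFC 6238 test vectors; never hard-code real secrets.
    secret = b"12345678901234567890"
    print(totp(secret, at=59))                   # 287082
    print(verify_totp(secret, "287082", at=59))  # True
    print(verify_totp(secret, "000000", at=59))  # False
```

Because the code depends on a secret stored on the legitimate user’s device and on the current time, an attacker who only obtains a reset password through a deepfake request still cannot produce a valid code.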

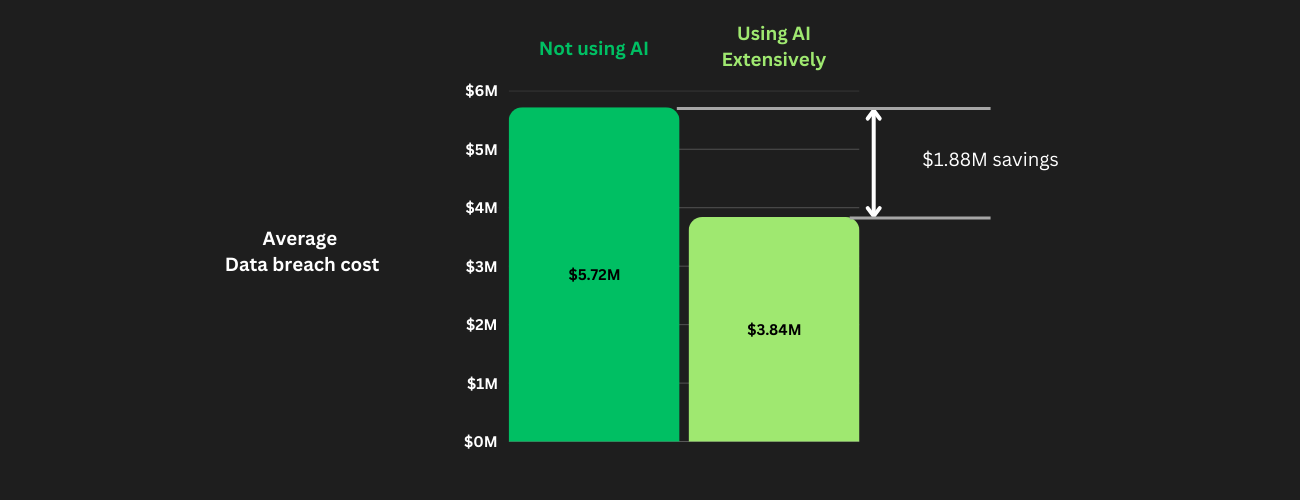

AI in cybersecurity has proven to be pivotal in business environments. According to IBM’s Cost of a Data Breach Report 2024, organizations that leveraged AI and automation heavily in their security operations saved an average of $1.88 million in data breach costs.

Those organizations also managed to identify and contain data breaches nearly 100 days faster on average than organizations that didn’t use AI and automation.

Chart created by OffGrid, data sourced from Cost of a Data Breach Report 2024

Notable Trends in Deepfake Technology and Social Engineering

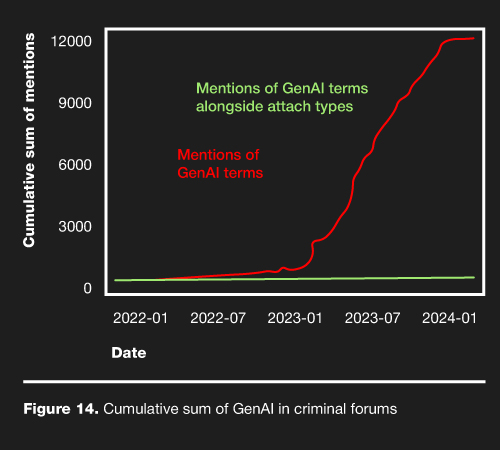

Although they didn’t explicitly mention “deepfake,” researchers behind the Verizon Data Breach Investigations Report observed a rapidly growing number of mentions of generative AI (GenAI) terms alongside attack types in cybercrime forums, as seen in the accompanying chart.

Chart created by OffGrid, data sourced from Verizon 2024 Data Breach Investigations Report

Given that deepfakes are a product of GenAI and their use in attacks is already widespread, we can anticipate a significant rise in deepfake-related incidents over time.

Conclusion

Deepfake technology shows both the potential and the risks of artificial intelligence. While deepfakes have opened doors to creative expression and innovation, their misuse in social engineering attacks threatens individuals and organizations.

The examples shared here, from a fraudulent celebrity endorsement to a $25 million video conference scam, highlight how effectively synthetic media can exploit human trust.

Technology alone isn’t enough to address deepfake threats. An effective defense requires a combination of AI detection tools, good cybersecurity practices, and a culture of heightened awareness. As deepfakes increase in sophistication, defenses must evolve in tandem.

Security training, multi-layered authentication systems, and investments in AI-powered solutions play a significant role in managing risks for organizations.

Beyond technology and protocols, questioning the authenticity of what we see and hear, especially when faced with urgent or unusual requests, is crucial. By staying informed, vigilant, and proactive, you can mitigate the risks of deepfake-driven social engineering.